


About Us

Welcome to ELTBOOKS.com!

ELTBOOKS.com is Japan's specialist ELT (English Language Teaching) book service for English teachers, schools and colleges. We offer a flat 20% discount on all books from all Western publishers that sell and stock their books in Japan (Pearson, Oxford, Cengage, e-future, Cambridge, Macmillan, etc.). We are located in Shibuya-ku, Tokyo, and our team is dedicated to bringing you the best possible customer experience and the best possible prices!

To find out how to order books from us, please click here, and check out our FAQ here.

Please note that, to maintain a high quality of service for every customer, we handle inquiries by email only, and we sell only the books listed on the website.

We look forward to helping you get the best books for your students at the best prices.

Readers Ranking

| 1 |  |

Football Forever (Level 1) Oxford University Press |

|---|

| 2 |  |

Where Is It? (Level 1) Oxford University Press |

|---|

| 3 |  |

Doctor, Doctor (Starter) Oxford University Press |

|---|

| 4 |  |

Lost! (Level 2) Oxford University Press |

|---|

| 5 |  |

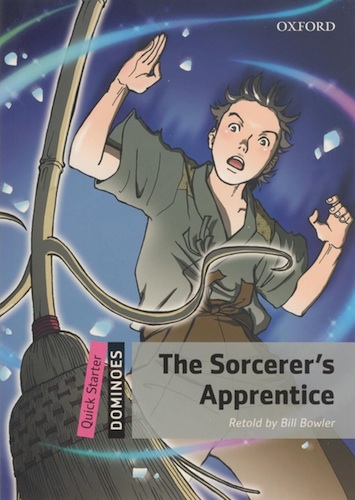

The Sorcerer's Apprentice (Quick Starters) Oxford University Press |

|---|

Contact Us

Please contact us via our contact form if you have any questions!

Proud to Support...

We are proud to support the following organization through charitable donations:
